Every developer working with MySQL understands how crucial the RDBMS is to their projects. It can support all kinds of projects, from gaming forums to healthcare solutions. According to research by DatabaseJournal, MySQL holds 44 percent, nearly half, of the database market.

Improving MySQL performance is also something every DBA struggles with at some point in their career. Don't worry, though: we're here to help. In this article, we'll look at five ways to quickly improve the performance of your MySQL database. Let's get started!

- MySQL and its applications
- Improve overall database performance
- #1: Manage the my.cnf file
- #2 and #3: Check MySQL Storage Engines and Schema Design
- #4 and #5: Indexes and Partitions
- Advanced Operations: Tips and Tricks

MySQL and its applications

Before you try to improve the performance of your MySQL application, one of the first things to consider is the infrastructure that supports it. You can't improve a system without first understanding what it runs on. For this reason, we need to take a step back and examine the server that hosts MySQL.

First, we'll want to check two basic things. Start with the amount of memory installed on the server, which you can see by running the free command. Then run the df command to see how much disk space is available on the server.

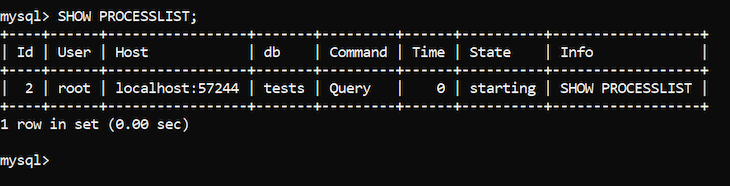

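With these details noted, connect to MySQL. First, check whether any unnecessary queries are running by using the SHOW PROCESSLIST command. Its output lists every current connection, including the statement it is running (the Info column) and how many seconds it has been in its current state (the Time column).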

If you see long-running queries that you don't recognize, it's a good idea to terminate them, since a long-running query can hold up other queries. However, you will most likely not see any suspicious queries, and even if you do, terminating one or two slow queries will not meaningfully improve database performance.
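If the process list is long, you can filter the same information through the information_schema.PROCESSLIST table instead. This is a minimal sketch: the 60-second threshold is an arbitrary example, and the thread ID 12345 in the commented-out KILL statement is a placeholder you would replace with an ID from the query's output.

```sql
-- List statements that have been running for more than 60 seconds,
-- longest first. TIME is measured in seconds.
SELECT ID, USER, HOST, DB, TIME, STATE, LEFT(INFO, 100) AS query_start
FROM information_schema.PROCESSLIST
WHERE COMMAND = 'Query'
  AND TIME > 60
ORDER BY TIME DESC;

-- Stop only the running statement of one thread. 12345 is a placeholder:
-- replace it with a real ID from the ID column above, then uncomment.
-- KILL QUERY 12345;
```

KILL QUERY stops only the running statement; plain KILL with the same ID closes the whole connection.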

To speed up your queries, you need to understand how queries and MySQL in general work.

Improve overall database performance

Before we walk you through the specific measures that help improve MySQL database performance, you need to understand the basics of database performance. When it comes to improving database performance, people often talk about improving the performance of CRUD (Create, Read, Update, and Delete) queries. In MySQL, these correspond to INSERT, SELECT, UPDATE, and DELETE queries.

All queries in MySQL run under the configuration defined in a central file, my.cnf, and many of the settings defined there have a direct impact on query performance.

Generally, you can improve the performance of INSERT queries by removing unnecessary indexes from the table the data is inserted into. The more indexes a table has, the more work each INSERT has to do, because every index must be updated along with the row.
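To find candidates for removal, MySQL 5.7 and later ship the sys schema, whose schema_unused_indexes view lists indexes with no recorded use since the server started. A sketch, assuming the sys schema and Performance Schema are enabled (the defaults); the insert_demo table and its idx_unused index are hypothetical.

```sql
-- Hypothetical table with one index that no query uses.
CREATE TABLE insert_demo (
  id INT UNSIGNED NOT NULL AUTO_INCREMENT PRIMARY KEY,
  a INT NOT NULL,
  b INT NOT NULL,
  INDEX idx_a (a),
  INDEX idx_unused (b)
) ENGINE = InnoDB;

-- Indexes with no recorded use since the server last started.
SELECT object_schema, object_name, index_name
FROM sys.schema_unused_indexes
WHERE object_schema = DATABASE();

-- Review every index on the table before dropping anything.
SHOW INDEX FROM insert_demo;

-- Each dropped index is one less structure every INSERT has to update.
ALTER TABLE insert_demo DROP INDEX idx_unused;
```

Because the view only counts usage since the last restart, check it on a server that has been running through a representative workload.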

To improve the performance of SELECT queries, we typically use indexes. To improve the performance of UPDATE queries, we perform updates in batches, which means we perform many smaller updates instead of one large update.
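A minimal sketch of one batch, assuming a hypothetical batch_demo table whose pending rows need to be marked as archived; the batch size of 10,000 is an example, not a recommendation. You would run the UPDATE repeatedly, from a script or a scheduled job, until it reports 0 affected rows.

```sql
-- Hypothetical table with rows to be archived.
CREATE TABLE batch_demo (
  id INT UNSIGNED NOT NULL AUTO_INCREMENT PRIMARY KEY,
  status VARCHAR(20) NOT NULL,
  INDEX idx_status (status)
) ENGINE = InnoDB;

-- One batch: update at most 10,000 matching rows, working through
-- them in primary-key order so each batch is predictable.
UPDATE batch_demo
SET status = 'archived'
WHERE status = 'pending'
ORDER BY id
LIMIT 10000;

-- Rows changed by the previous statement; repeat the UPDATE until this is 0.
SELECT ROW_COUNT();
```

Smaller batches keep each transaction short, so locks are held briefly and other queries are not blocked for long.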

To improve the performance of DELETE queries that remove every row, we can replace DELETE with TRUNCATE. TRUNCATE deletes all rows from a table and is generally much faster than DELETE because, instead of removing rows one by one, MySQL drops and re-creates the table, which involves far less overhead.
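The difference is easiest to see side by side. A sketch using a hypothetical truncate_demo table:

```sql
-- Hypothetical table with a few rows.
CREATE TABLE truncate_demo (
  id INT UNSIGNED NOT NULL AUTO_INCREMENT PRIMARY KEY,
  payload VARCHAR(50) NOT NULL
) ENGINE = InnoDB;
INSERT INTO truncate_demo (payload) VALUES ('a'), ('b'), ('c');

-- Removes rows one at a time, records each change so it can be rolled
-- back inside a transaction, and leaves the AUTO_INCREMENT counter as is.
DELETE FROM truncate_demo;

-- Drops and re-creates the table: much faster on large tables, resets
-- AUTO_INCREMENT, commits implicitly, and cannot be rolled back.
TRUNCATE TABLE truncate_demo;
```

Note that TRUNCATE TABLE fails on an InnoDB table that other tables reference through foreign keys; in that case you are left with DELETE.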

The tips above are a good starting point for understanding why a MySQL database underperforms.

However, to understand the reasons behind these recommendations, we have to dig deeper. I recommend backing up your database before you change anything and then coming back to this article. Now, let's review five ways to quickly improve your database performance.

#1: Managing the my.cnf File

When trying to improve MySQL query performance, one of the first things to consider would be the my.cnf file, which contains all the parameters needed for MySQL to work.

If you are using Linux, you can find the my.cnf file in one of the following locations:

- /var/lib/mysql/my.cnf
- /etc/my.cnf
- /etc/mysql/my.cnf
- /usr/etc/my.cnf

If you are using Windows, you can find the file in the /bin/

mysql/mysql *.*.* directory. mysql *.” *

.*”* refers to your version of MySQL. Open the file and find

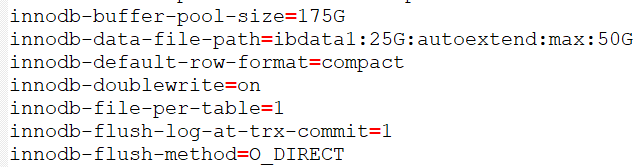

the parameters surrounding InnoDB:

All these parameters relate to InnoDB, one of the main storage engines in MySQL. You can use other storage engines, but since InnoDB is MySQL's default storage engine, we suggest you stick with it.

my.cnf parameters

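Let's review the parameters. The innodb-buffer-pool-size parameter defines the size of the buffer pool, the memory area InnoDB uses to cache table data and indexes. The innodb-data-file-path parameter defines the name, size, and attributes of the InnoDB system tablespace data files; by default, this is a single auto-extending file named ibdata1. ibdata1 is InnoDB's system tablespace, and when innodb-file-per-table is disabled, it also stores the data and indexes of every InnoDB table.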

innodb-default-row-format specifies the default row format for InnoDB tables: DYNAMIC (the default), COMPACT, or REDUNDANT. innodb-doublewrite specifies whether InnoDB's doublewrite buffer, which protects against partially written pages, is enabled.

innodb-flush-log-at-trx-commit controls how the redo log is written and flushed to disk when transactions commit. The innodb-flush-method parameter defines the method InnoDB uses to flush data to the data files and log files (for example, fsync or O_DIRECT on Linux).
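Before changing anything, it helps to see the values the server is actually using. A sketch, assuming you can connect with the mysql client; note that inside SQL the variable names use underscores, while my.cnf accepts dashes or underscores interchangeably.

```sql
-- Current values of the InnoDB parameters discussed above.
SHOW GLOBAL VARIABLES
WHERE Variable_name IN (
  'innodb_buffer_pool_size',
  'innodb_data_file_path',
  'innodb_default_row_format',
  'innodb_doublewrite',
  'innodb_flush_log_at_trx_commit',
  'innodb_flush_method'
);
```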

Configuring my.cnf parameters

Remember how you discovered the amount of RAM and hard disk space available within your infrastructure? Now it’s time to use those details to get the best possible performance. We will set the parameters as follows.

The innodb-buffer-pool-size parameter should be set to 50 to 60 percent of the available RAM. The larger it is, the more data and indexes InnoDB can keep in memory, and the less often queries, including inserts, have to wait for disk reads.
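As a worked example, assume the free command reported 16 GB of RAM on a server dedicated to MySQL (a hypothetical figure). At 60 percent, the buffer pool comes to about 9.6 GB, or 10,307,921,510 bytes. A sketch of checking that arithmetic and applying it:

```sql
-- 60 percent of a hypothetical 16 GB of RAM, in bytes (integer division).
SELECT 16 * 1024 * 1024 * 1024 * 60 DIV 100 AS target_bytes;

-- The buffer pool can be resized at runtime (MySQL 5.7.5 and later).
-- MySQL adjusts the value to a multiple of
-- innodb_buffer_pool_chunk_size * innodb_buffer_pool_instances.
SET GLOBAL innodb_buffer_pool_size = 10307921510;

-- Resizing runs in the background; this status variable reports progress.
SHOW STATUS LIKE 'Innodb_buffer_pool_resize_status';

-- Size currently in effect, in GB.
SELECT @@GLOBAL.innodb_buffer_pool_size / POW(1024, 3) AS buffer_pool_gb;
```

To keep the value after a restart, also add it to the [mysqld] section of my.cnf, or, on MySQL 8.0, use SET PERSIST instead of SET GLOBAL, which records the value in mysqld-auto.cnf.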

Increase the size given in innodb-data-file-path (for example, ibdata1:10G:autoextend) so that the system tablespace can accommodate your data. We recommend a value of 5 to 10 GB.

If the parameter is not present, add an innodb-file-per-table parameter and set it to 1. This tells InnoDB to store each table in its own .ibd file instead of inside ibdata1, which keeps the system tablespace significantly smaller and lets you reclaim disk space when a table is dropped or truncated. innodb-file-per-table has been enabled by default since MySQL 5.6.6.
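The same setting can be checked and changed from SQL. A sketch; file_per_table_demo is a hypothetical table standing in for one of your own.

```sql
-- 1 (ON) means each new InnoDB table gets its own .ibd file.
SELECT @@GLOBAL.innodb_file_per_table;

-- The variable is dynamic, so it can be enabled without a restart.
-- Add innodb-file-per-table = 1 to my.cnf as well so it survives restarts.
SET GLOBAL innodb_file_per_table = ON;

-- The setting only affects tables created or rebuilt afterwards.
-- Rebuilding an existing table moves it into its own .ibd file.
CREATE TABLE file_per_table_demo (id INT PRIMARY KEY) ENGINE = InnoDB;
ALTER TABLE file_per_table_demo ENGINE = InnoDB;
```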

We recommend leaving the innodb-flush-log-at-trx-commit parameter at its default value of 1, which ensures ACID compliance. If you want faster write performance, you can consider changing the value to 0 or 2, but note that you give up part of ACID durability, the guarantee that committed data survives a crash: with 0, a MySQL crash can lose roughly the last second of transactions; with 2, only an operating system crash or power outage can.
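A sketch of inspecting and temporarily relaxing this setting, for example during a bulk import you could repeat if it were interrupted:

```sql
-- 1 (the default): write and flush the redo log at every commit.
SELECT @@GLOBAL.innodb_flush_log_at_trx_commit;

-- 2: write the log at every commit but flush it to disk about once per
-- second. A MySQL crash loses nothing; an OS crash or power cut can lose
-- roughly the last second of committed transactions.
-- (0 would also let a MySQL crash lose that last second.)
SET GLOBAL innodb_flush_log_at_trx_commit = 2;

-- Restore the fully durable default afterwards.
SET GLOBAL innodb_flush_log_at_trx_commit = 1;
```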

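Leave the flush method as is. If it is set to O_DIRECT, InnoDB bypasses the operating system's page cache when writing its data files, which avoids caching the same pages twice (once in the buffer pool and once in the OS cache) and can speed up write-heavy work such as data imports.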

Following the steps above should improve performance even if your server has a limited amount of RAM and storage space.

#2 and #3: Check MySQL Storage Engines and Schema Design

In addition to tuning the my.cnf file, we also need to examine the storage engines we use and the way our schema is designed. If you are using MySQL, use InnoDB. If you are using Percona Server, use Percona XtraDB.
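To check which engines your tables actually use, you can query information_schema. A sketch; engine_demo is a hypothetical MyISAM table created only to show the conversion.

```sql
-- User tables that are not using InnoDB.
SELECT TABLE_SCHEMA, TABLE_NAME, ENGINE
FROM information_schema.TABLES
WHERE TABLE_TYPE = 'BASE TABLE'
  AND ENGINE <> 'InnoDB'
  AND TABLE_SCHEMA NOT IN ('mysql', 'information_schema', 'performance_schema', 'sys');

-- Convert a table to InnoDB. This rebuilds the whole table, so on large
-- tables schedule it for a quiet period.
CREATE TABLE engine_demo (id INT PRIMARY KEY) ENGINE = MyISAM;
ALTER TABLE engine_demo ENGINE = InnoDB;
```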

InnoDB Parameters

At the time of writing, InnoDB is the only general-purpose storage engine bundled with standard MySQL that supports full ACID transactions. These properties ensure data integrity even in the event of power outages or similar interruptions. As mentioned above, you can trade ACID durability for speed by setting the innodb-flush-log-at-trx-commit parameter to 0 or 2.

InnoDB provides several parameters that you can use to quickly improve the performance of queries and other operations, including innodb-buffer-pool-size and innodb-log-file-size.

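Set the buffer pool size to 60 percent of the available RAM within the infrastructure and the log file size to about one quarter of the value assigned to the buffer pool. InnoDB records changes in these redo log files before writing them to the data files and replays them when recovering from a crash. Larger log files reduce how often InnoDB has to flush changes to the data files, which improves write performance, at the cost of a longer recovery after a crash. From MySQL 8.0.30, innodb-redo-log-capacity supersedes innodb-log-file-size.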

Both InnoDB and XtraDB support row-level locking. In simple terms, row-level locking means that a transaction locks only the rows it directly affects. Compared with table-level locking, this is a significant advantage: other transactions can keep reading and writing the rest of the table while data is being updated.
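Row-level locking is easiest to observe with two connections. A sketch using a hypothetical lock_demo table; the second session's statements are shown as comments because they must run from a separate connection while the first transaction is still open.

```sql
-- Hypothetical table; the PRIMARY KEY matters, see the note below.
CREATE TABLE lock_demo (
  id INT UNSIGNED NOT NULL PRIMARY KEY,
  balance DECIMAL(10, 2) NOT NULL
) ENGINE = InnoDB;
INSERT INTO lock_demo VALUES (1, 100.00), (2, 200.00);

-- Session 1: lock row 1 and keep the transaction open.
START TRANSACTION;
UPDATE lock_demo SET balance = balance - 10 WHERE id = 1;

-- Session 2 (a second connection), while session 1 is still open:
--   UPDATE lock_demo SET balance = balance + 10 WHERE id = 2;  -- succeeds at once
--   UPDATE lock_demo SET balance = balance + 10 WHERE id = 1;  -- waits for session 1

-- Session 1: commit, releasing the lock on row 1.
COMMIT;
```

InnoDB locks the index records it scans, so the blocked statement waits until session 1 commits or innodb_lock_wait_timeout (50 seconds by default) expires. If the WHERE column had no index, InnoDB would scan, and lock, far more rows than the one being changed.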

Unless your use case specifically requires another storage engine, avoid using anything other than InnoDB. MyISAM does not support transactions and is not crash-safe, and other storage engines should be used only in specific cases. For more information, see the MySQL documentation.

If the data you are working with exceeds 10 million rows, make sure all tables are normalized.
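As an illustration of what normalizing means in practice, here is a sketch with hypothetical tables: customer details that a flat table would repeat on every order row are stored once and referenced by ID.

```sql
-- Denormalized: customer name and email repeated on every order row.
CREATE TABLE orders_flat (
  order_id INT UNSIGNED NOT NULL AUTO_INCREMENT PRIMARY KEY,
  customer_name VARCHAR(100) NOT NULL,
  customer_email VARCHAR(255) NOT NULL,
  total DECIMAL(10, 2) NOT NULL
) ENGINE = InnoDB;

-- Normalized: customer details stored once...
CREATE TABLE customers (
  customer_id INT UNSIGNED NOT NULL AUTO_INCREMENT PRIMARY KEY,
  name VARCHAR(100) NOT NULL,
  email VARCHAR(255) NOT NULL,
  UNIQUE KEY uq_customers_email (email)
) ENGINE = InnoDB;

-- ...and each order refers to its customer by a compact integer ID.
CREATE TABLE orders (
  order_id INT UNSIGNED NOT NULL AUTO_INCREMENT PRIMARY KEY,
  customer_id INT UNSIGNED NOT NULL,
  total DECIMAL(10, 2) NOT NULL,
  CONSTRAINT fk_orders_customer
    FOREIGN KEY (customer_id) REFERENCES customers (customer_id)
) ENGINE = InnoDB;
```

With millions of orders, storing a 4-byte customer_id instead of a name and email on every row keeps the orders table, and its indexes, much smaller.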

Indexing queries

Make sure that at least some of the columns used by your SELECT queries are indexed. For best results, index the columns that appear in the WHERE clause, or only the first of them if you need to save space. Indexes improve the performance of queries that read data because they let MySQL find rows with specific values quickly instead of scanning the whole table.
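A sketch of adding an index for a WHERE column and confirming that MySQL uses it, with a hypothetical index_demo table:

```sql
CREATE TABLE index_demo (
  id INT UNSIGNED NOT NULL AUTO_INCREMENT PRIMARY KEY,
  a1 VARCHAR(50) NOT NULL,
  a2 VARCHAR(50) NOT NULL
) ENGINE = InnoDB;

-- Index the column used in the WHERE clause.
CREATE INDEX idx_index_demo_a1 ON index_demo (a1);

-- The key column of the EXPLAIN output shows which index MySQL chose;
-- NULL there means a full table scan.
EXPLAIN SELECT id, a2 FROM index_demo WHERE a1 = 'Demo';
```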

Data types and integers

It is important that you know your data types and character sets. To save disk space, use the CHAR (fixed-length character) or VARCHAR (variable-length character) data types instead of TEXT where possible. The same applies to integers: consider using SMALLINT (2 bytes) instead of INT (4 bytes) when its range of -32,768 to 32,767, or 0 to 65,535 when unsigned, is enough.

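Correctly specify the length of your data types. If values never exceed 50 characters, consider declaring a length of 50 rather than 255. For CHAR columns, which are padded to their declared length, this saves disk space directly; for VARCHAR columns, which store only the characters actually used plus a one- or two-byte length prefix, the savings on disk are much smaller.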

Make sure that the tables do not store any data that is not needed. The less data you store, the less data you have to read and update.
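A sketch of a table that applies these choices; type_demo and its columns are hypothetical, and the byte counts in the comments are per value.

```sql
CREATE TABLE type_demo (
  id INT UNSIGNED NOT NULL AUTO_INCREMENT,           -- 4 bytes
  age TINYINT UNSIGNED NOT NULL,                     -- 1 byte, 0 to 255
  store_id SMALLINT UNSIGNED NOT NULL,               -- 2 bytes, 0 to 65,535
  country_code CHAR(2) CHARACTER SET ascii NOT NULL, -- always 2 bytes
  city VARCHAR(50) NOT NULL,                         -- bytes used + 1-byte length prefix
  PRIMARY KEY (id)
) ENGINE = InnoDB DEFAULT CHARSET = utf8mb4;

-- Compare data and index sizes of the tables in the current schema.
SELECT TABLE_NAME, DATA_LENGTH, INDEX_LENGTH
FROM information_schema.TABLES
WHERE TABLE_SCHEMA = DATABASE()
ORDER BY DATA_LENGTH DESC;
```

Declaring country_code with the single-byte ascii character set is a deliberate choice here: two-letter codes never need multibyte characters, so there is no reason to reserve utf8mb4's up to four bytes per character.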

#4 and #5: Indexes and Partitions

In addition to the factors described above, indexes and partitions are also immensely important. Indexes help us find rows with specific values quickly, while partitions act as tables within tables to further improve performance.

Both approaches have a cost for INSERT, UPDATE, and DELETE queries, because every change must also be applied to the indexes, and every row must be routed to the correct partition.

However, both approaches also have a silver lining: both speed up read operations. Partitions can make SELECT queries faster because they split a table into smaller pieces, and MySQL can skip the partitions that cannot contain matching rows (known as partition pruning). Indexes, on the other hand, make SELECT queries with a WHERE clause faster.

Best practices for indexes

Both indexes and partitions have several different types. For the purposes of this article, we won’t discuss them all, but for indexes, note the following.

B-tree indexes, the most common type, can be used for comparisons with the =, >, >=, <, <=, and BETWEEN operators, as well as for LIKE patterns that do not start with a wildcard.

Covering indexes include all the columns used by a specific query. For example, a covering index on columns a1, a2, and a3 would satisfy the following query, because it selects and filters only on those columns:

SELECT a1, a2, a3 FROM demo WHERE a1 = 'Demo' AND a2 = 'Demo 2' AND a3 = 'Demo 3';
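A sketch of creating such an index and checking whether a query is covered; covering_demo is a hypothetical table with one extra column, notes, that is not in the index.

```sql
CREATE TABLE covering_demo (
  id INT UNSIGNED NOT NULL AUTO_INCREMENT PRIMARY KEY,
  a1 VARCHAR(50) NOT NULL,
  a2 VARCHAR(50) NOT NULL,
  a3 VARCHAR(50) NOT NULL,
  notes TEXT
) ENGINE = InnoDB;

CREATE INDEX idx_a1_a2_a3 ON covering_demo (a1, a2, a3);

-- Covered: every column the query touches is in the index, so the Extra
-- column of EXPLAIN typically reports "Using index".
EXPLAIN SELECT a1, a2, a3 FROM covering_demo
WHERE a1 = 'Demo' AND a2 = 'Demo 2' AND a3 = 'Demo 3';

-- Not covered: notes is not in the index, so InnoDB must also read the row.
EXPLAIN SELECT notes FROM covering_demo WHERE a1 = 'Demo';
```

Because MySQL matches a composite index from its leftmost column, the same index generally cannot be used for a direct lookup on a2 or a3 alone (MySQL 8.0's skip scan handles only a few special cases).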

Hash indexes are available only in certain storage engines, such as MEMORY, and are used only for equality comparisons with the = or <=> operators.
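A sketch of an explicit hash index on a MEMORY table; hash_demo is hypothetical, and keep in mind that MEMORY tables lose their contents when the server restarts.

```sql
CREATE TABLE hash_demo (
  session_id CHAR(32) NOT NULL,
  user_id INT UNSIGNED NOT NULL,
  INDEX idx_session USING HASH (session_id)
) ENGINE = MEMORY;

-- Equality lookup: can use the hash index.
SELECT user_id FROM hash_demo WHERE session_id = 'a1b2c3';

-- Prefix or range lookups cannot use a hash index and fall back to a scan.
SELECT user_id FROM hash_demo WHERE session_id LIKE 'a1%';
```

If you request USING HASH on an InnoDB table, MySQL creates a B-tree index instead, because InnoDB does not support user-defined hash indexes.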

Partitions in MySQL

For partitions, keep in mind that they also come in multiple flavors.

Partitioning by RANGE assigns rows to partitions according to the range their column value falls into. This type of partitioning is particularly useful for dividing large tables by character or number.

Hash partitioning distributes rows across a fixed number of partitions based on a hash of a column expression; for plain HASH, a row goes to partition MOD(expression, number_of_partitions). For example, PARTITION BY HASH(id) PARTITIONS 8 splits the table into eight partitions, each stored separately.
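A sketch of the HASH example as a complete statement, using a hypothetical events_hashed table. MySQL names the partitions p0 through p7, and with plain HASH the row with id 10 lands in partition MOD(10, 8) = 2, that is, p2.

```sql
CREATE TABLE events_hashed (
  id INT UNSIGNED NOT NULL,
  payload VARCHAR(100) NOT NULL,
  PRIMARY KEY (id)
) ENGINE = InnoDB
PARTITION BY HASH (id) PARTITIONS 8;

INSERT INTO events_hashed VALUES (10, 'ten');

-- Read a single partition explicitly to see where the row went.
SELECT id, payload FROM events_hashed PARTITION (p2);

-- Per-partition row estimates (statistics, so they may lag behind).
SELECT PARTITION_NAME, TABLE_ROWS
FROM information_schema.PARTITIONS
WHERE TABLE_SCHEMA = DATABASE() AND TABLE_NAME = 'events_hashed';
```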

All types of partitioning can be found in the MySQL documentation. The partition is usually defined when you create a table and in many cases looks like this:

CREATE TABLE table_name ( [column_details] ) [partitioning_details];

Partitioning by RANGE, for example, would look like this:

CREATE TABLE table_name ( demo_column INT NOT NULL DEFAULT 0 ) PARTITION BY RANGE (demo_column) ( PARTITION p1 VALUES LESS THAN (100), PARTITION p2 VALUES LESS THAN (200) );

Plain RANGE partitioning requires an integer expression; to partition by a string column, use RANGE COLUMNS instead.

Other types of partitioning look very similar to the example above; the difference is that RANGE is replaced by LIST, HASH, KEY, or another type, along with that type's partition definitions.

Partitioning has another very important advantage: it lets you delete all data stored in a single partition. ALTER TABLE demo TRUNCATE PARTITION partition_name; does the trick.
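The pieces above can be combined into one sketch with a hypothetical orders_by_year table: RANGE partitions by year, a check that MySQL prunes partitions, and truncating a single partition.

```sql
-- Every PRIMARY KEY or UNIQUE key on a partitioned table must include the
-- partitioning column, hence PRIMARY KEY (id, order_year).
CREATE TABLE orders_by_year (
  id INT UNSIGNED NOT NULL AUTO_INCREMENT,
  order_year SMALLINT UNSIGNED NOT NULL,
  total DECIMAL(10, 2) NOT NULL,
  PRIMARY KEY (id, order_year)
) ENGINE = InnoDB
PARTITION BY RANGE (order_year) (
  PARTITION p2022 VALUES LESS THAN (2023),
  PARTITION p2023 VALUES LESS THAN (2024),
  PARTITION p_max VALUES LESS THAN MAXVALUE  -- catches every later year
);

INSERT INTO orders_by_year (order_year, total) VALUES (2022, 10.00), (2023, 20.00);

-- The partitions column of EXPLAIN lists the partitions MySQL will read;
-- here it should show only p2023 (partition pruning).
EXPLAIN SELECT SUM(total) FROM orders_by_year WHERE order_year = 2023;

-- Empty one partition without touching the others.
ALTER TABLE orders_by_year TRUNCATE PARTITION p2022;
```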

Advanced Operations: Tips and Tricks

Both indexing and partitioning will greatly help improve read operations, but there are a couple of additional things we need to keep in mind.

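COUNT(*) queries without a WHERE clause are nearly instant on MyISAM tables, because MyISAM stores an exact row count in its metadata; this is one of MyISAM's few remaining advantages. InnoDB does not keep such a count, because concurrent transactions can see different numbers of rows at the same time, so it has to scan an index to count them.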
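When an approximate figure is good enough, InnoDB's table statistics can stand in for an exact count. A sketch with a hypothetical count_demo table:

```sql
CREATE TABLE count_demo (id INT PRIMARY KEY) ENGINE = InnoDB;
INSERT INTO count_demo VALUES (1), (2), (3);

-- Exact: InnoDB scans an index to count the rows.
SELECT COUNT(*) FROM count_demo;

-- Estimate: read from table statistics instead of counting. For InnoDB
-- this figure can be noticeably off; ANALYZE TABLE refreshes it.
ANALYZE TABLE count_demo;
SELECT TABLE_ROWS
FROM information_schema.TABLES
WHERE TABLE_SCHEMA = DATABASE() AND TABLE_NAME = 'count_demo';
```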

For faster SELECT queries with wildcards, use wildcards only at the end of the search string. Queries should resemble the following; note that there is no wildcard at the beginning of the string:

SELECT * FROM demo_table WHERE demo_column LIKE 'string%';

A wildcard at the beginning of the string means the match could start anywhere, so MySQL cannot use a B-tree index to narrow the search and has to examine every row, which slows the query down.
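You can see the difference with EXPLAIN. A sketch using a hypothetical like_demo table with an index on demo_column; the exact plan can vary with table size and statistics.

```sql
CREATE TABLE like_demo (
  id INT UNSIGNED NOT NULL AUTO_INCREMENT PRIMARY KEY,
  demo_column VARCHAR(100) NOT NULL,
  INDEX idx_demo_column (demo_column)
) ENGINE = InnoDB;

-- Prefix pattern: the index can be used as a range scan
-- (the type column typically shows "range").
EXPLAIN SELECT id FROM like_demo WHERE demo_column LIKE 'string%';

-- Leading wildcard: there is no starting point in the index, so MySQL
-- reads every entry (type "index" or "ALL").
EXPLAIN SELECT id FROM like_demo WHERE demo_column LIKE '%string';
```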

UNIQUE indexes ensure that each value in a column is unique. If you try to insert a duplicate value, MySQL rejects the statement with a duplicate-key error.

The IGNORE keyword is useful if we want to ignore errors, such as duplicate-key errors, when inserting data or performing other operations; MySQL reports them as warnings instead. Simply add IGNORE to the statement and proceed as usual:

INSERT IGNORE INTO demo_table (c1) VALUES ('Demo');
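A sketch showing UNIQUE and INSERT IGNORE together, with a hypothetical unique_demo table:

```sql
-- Hypothetical table with a UNIQUE index on c1.
CREATE TABLE unique_demo (
  id INT UNSIGNED NOT NULL AUTO_INCREMENT PRIMARY KEY,
  c1 VARCHAR(50) NOT NULL,
  UNIQUE KEY uq_unique_demo_c1 (c1)
) ENGINE = InnoDB;

INSERT INTO unique_demo (c1) VALUES ('Demo');

-- Without IGNORE, inserting 'Demo' again fails with a duplicate-entry
-- error (1062). With IGNORE, the duplicate row is skipped and a warning
-- is raised instead.
INSERT IGNORE INTO unique_demo (c1) VALUES ('Demo');

-- Shows the duplicate-key error that IGNORE downgraded to a warning.
SHOW WARNINGS;
```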

LOAD DATA INFILE is usually significantly faster than inserting data with individual INSERT queries, because it avoids much of the per-statement overhead, and SELECT … INTO OUTFILE is a fast way to export data, for example for regular backups. See the MySQL documentation for more information.
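A sketch of an export and re-import round trip. It assumes the server's secure_file_priv setting allows /var/lib/mysql-files/ (the default on many Linux packages, but check yours) and that your account has the FILE privilege; export_demo and export_demo_copy are hypothetical tables.

```sql
-- Directory the server may read from and write to; NULL disables both.
SHOW VARIABLES LIKE 'secure_file_priv';

CREATE TABLE export_demo (id INT PRIMARY KEY, c1 VARCHAR(50) NOT NULL) ENGINE = InnoDB;
CREATE TABLE export_demo_copy LIKE export_demo;
INSERT INTO export_demo VALUES (1, 'Demo'), (2, 'Demo 2');

-- Export: the server writes the file, which must not already exist.
SELECT id, c1 FROM export_demo
INTO OUTFILE '/var/lib/mysql-files/export_demo.csv'
FIELDS TERMINATED BY ',' ENCLOSED BY '"'
LINES TERMINATED BY '\n';

-- Import: one statement loads the whole file.
LOAD DATA INFILE '/var/lib/mysql-files/export_demo.csv'
INTO TABLE export_demo_copy
FIELDS TERMINATED BY ',' ENCLOSED BY '"'
LINES TERMINATED BY '\n'
(id, c1);
```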

Older versions of MySQL can struggle with FULLTEXT indexes on larger datasets when searching for anything containing an @ sign. This is a bug in MySQL, BUG#104263, and it can cause such queries to time out.

Avoid issuing ALTER TABLE queries on tables that hold large data sets. When ALTER TABLE uses the COPY algorithm, MySQL creates a new table with the new definition, copies the data into it, and then swaps the original table with the copy. On large data sets this usually takes a lot of time, so keep that in mind. Newer versions can perform many changes in place or, since MySQL 8.0.12, instantly, which avoids most of that cost.
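One way to avoid an accidental full table copy is to request the algorithm explicitly, so that MySQL refuses the change instead of quietly falling back to copying. A sketch with a hypothetical alter_demo table, assuming MySQL 8.0.12 or later for INSTANT:

```sql
CREATE TABLE alter_demo (
  id INT UNSIGNED NOT NULL AUTO_INCREMENT PRIMARY KEY,
  name VARCHAR(50) NOT NULL
) ENGINE = InnoDB;

-- Metadata-only change. If INSTANT is not possible for this operation,
-- the statement fails instead of rebuilding the table.
ALTER TABLE alter_demo ADD COLUMN note VARCHAR(50) NULL, ALGORITHM = INSTANT;

-- Build an index in place while still allowing concurrent reads and writes.
ALTER TABLE alter_demo ADD INDEX idx_note (note), ALGORITHM = INPLACE, LOCK = NONE;
```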

Sometimes it is useful to use the DEFAULT keyword to set default values on many rows at once. Imagine creating a table and then inserting a billion rows into it. If a column is defined with a DEFAULT value, every row that doesn't specify a value for it is pre-populated with that value, which avoids potentially slow ALTER queries like those described above. Define a column as follows:

column_name VARCHAR(255) NOT NULL DEFAULT 'value'

Hopefully, the tips in this article will help you improve the performance of your MySQL databases. However, as with everything, keep in mind that there are disadvantages.

The disadvantages of each approach

Improving MySQL performance using the methods described above can have the following drawbacks:

Tuning my.cnf requires some knowledge of Linux internals and, in many cases, a reasonably powerful server. You can't improve performance much if your RAM is limited to 256 MB or if you only have 2 GB of disk space in total.

Understanding my.cnf and storage engines well enough to modify their configuration usually requires deep knowledge of MySQL. You need to know exactly what each parameter you modify does, what its appropriate values are, and more.

Windows users have it easier, as my.ini, the Windows equivalent of my.cnf, includes extensive comments that explain its settings, while Linux users usually have to define many settings themselves.

The main disadvantage of data types and character sets is that every character takes up disk space, and character sets differ in how much: latin1 uses one byte per character, while utf8mb4 uses up to four. That difference matters when we're dealing with large data sets, so it's something to think about as well. See the MySQL documentation for more information.
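You can check these per-character costs directly on your server. A sketch; the second query assumes your client connection uses a character set that can represent the é in 'café' (utf8mb4 is the MySQL 8.0 default).

```sql
-- Maximum bytes per character: 1 for latin1, 4 for utf8mb4.
SELECT CHARACTER_SET_NAME, MAXLEN
FROM information_schema.CHARACTER_SETS
WHERE CHARACTER_SET_NAME IN ('latin1', 'utf8mb4');

-- Bytes actually used by the same text in each character set.
SELECT
  LENGTH(CONVERT('café' USING latin1))  AS latin1_bytes,
  LENGTH(CONVERT('café' USING utf8mb4)) AS utf8mb4_bytes,
  CHAR_LENGTH('café')                   AS characters;
```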

Indexes and partitions typically speed up SELECT operations at the expense of slowing down everything else, including INSERT, UPDATE, and DELETE, since those queries also have to insert, update, or delete data in indexes and partitions.

Conclusion

In this article, we’ve discussed five ways you can quickly improve the performance of your MySQL database. Each approach has its own unique advantages and disadvantages and is applicable in different scenarios. However, whether or not the pros outweigh the cons is for you to decide.

Familiarize yourself with the documentation surrounding the storage engine of your choice. Use InnoDB or XtraDB as your storage engine, do your best to normalize the tables you’re working with, avoid using unnecessary sizes for your data types, and index your columns to speed up SELECT queries.

Indexes and partitions are used to speed up SELECT queries at the expense of slowing down INSERT, UPDATE, and DELETE. Both approaches have multiple types and can be incredibly useful if used wisely.

As always, before attempting to improve the performance of your MySQL application using one or more of the methods described in this article, be sure to evaluate all available options, back up your data before testing anything, and test all modifications in a local test environment first. Make modifications wisely, and always keep in mind that a performance gain in one place often comes at the cost of performance somewhere else.

I hope you liked this article. Be sure to leave a comment if you have any questions, and happy coding.