Introduction

HAProxy, which stands for High Availability Proxy, is a popular open source TCP/HTTP load balancer and proxy that runs on Linux, macOS, and FreeBSD. Its most common use is to improve the performance and reliability of a server environment by distributing the workload across multiple servers (e.g., web, application, database). It is used in many high-profile environments, including GitHub, Imgur, Instagram, and Twitter.

In this guide, you’ll get an overview of what HAProxy is, review load balancing terminology, and see examples of how it could be used to improve the performance and reliability of your own server environment.

HAProxy Terminology

There are many terms and concepts that are important when talking about load balancing and proxying. You will review the commonly used terms in the following subsections.

Before getting into the basic types of load balancing, you should start with a review of ACLs, backends, and frontends.

Access Control List (ACL)

In relation to load balancing, ACLs are used to test some condition and perform an action (for example, selecting a server or blocking a request) based on the result of the test. Using ACLs allows flexible network traffic forwarding based on a variety of factors, such as pattern matching and the number of connections to a backend.

Example of an ACL:

    acl url_blog path_beg /blog

This ACL matches if the path of a user’s request begins with /blog. This would match a request for http://yourdomain.com/blog/blog-entry-1, for example.
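To make the two kinds of actions mentioned above concrete (selecting a backend and blocking a request), here is a minimal sketch of a frontend that uses ACLs for both. The url_admin ACL and the /admin path are hypothetical and are only here for illustration; the backend names match the ones used later in this guide.

```haproxy
frontend http
    bind *:80
    mode http

    # Matches requests whose path begins with /blog
    acl url_blog path_beg /blog

    # Hypothetical ACL: matches requests whose path begins with /admin
    acl url_admin path_beg /admin

    # Blocking a request: deny anything that matches url_admin
    http-request deny if url_admin

    # Selecting a backend: send /blog traffic to blog-backend
    use_backend blog-backend if url_blog

    # Everything else goes to web-backend
    default_backend web-backend
```

An ACL does nothing on its own; it only names a condition. The action is taken by the rule that references it, such as http-request deny or use_backend.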

For a detailed guide on using ACLs, refer to the HAProxy Configuration Manual.

Backend

A backend is a set of servers that receives forwarded requests. Backends are defined in the backend section of the HAProxy configuration. In its most basic form, a backend can be defined by:

- which load balancing algorithm to use

- a list of servers and ports

A backend can contain one or more servers. Generally speaking, adding more servers to your backend will increase your potential load capacity by spreading the load across multiple servers. Greater reliability is also achieved this way, in case some of your backend servers become unavailable.

Here is an example of a configuration of two backends, web-backend and blog-backend with two web servers on each, listening on port 80:

    backend web-backend
        balance roundrobin
        server web1 web1.yourdomain.com:80 check
        server web2 web2.yourdomain.com:80 check

    backend blog-backend
        balance roundrobin
        mode http
        server blog1 blog1.yourdomain.com:80 check
        server blog2 blog2.yourdomain.com:80 check

The balance roundrobin line specifies the load balancing algorithm, which is detailed in the Load Balancing Algorithms section.

mode http specifies that layer 7 proxying will be used, which is explained in the Load Balancing Types section.

The check option at the end of the server directives specifies that health checks should be performed on those backend servers.

Frontend

A frontend defines how requests should be forwarded to backends. Frontends are defined in the frontend section of the HAProxy configuration. Their definitions consist of the following components:

- a set of IP addresses and a port (for example, 10.1.1.7:80, *:443, and so on)

- ACLs

- use_backend rules, which define which backends to use depending on which ACL conditions match, and/or a default_backend rule that handles any other cases

A frontend can be configured for various types of network traffic, as explained in the next section.

Load balancing types

Now that you understand the basic components that are used in load balancing, you can move on to the basic load balancing types

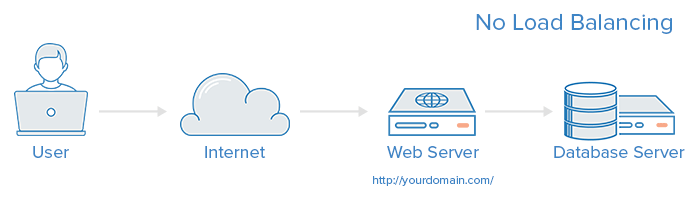

. No load balancing

A simple web application environment without load balancing might look like this:

In this example, the user connects directly to your web server at yourdomain.com, and there is no load balancing. If your only web server goes down, the user will no longer be able to access it. Also, if many users try to access your server simultaneously and it can’t handle the load, they may have a slow experience or may not be able to connect at all.

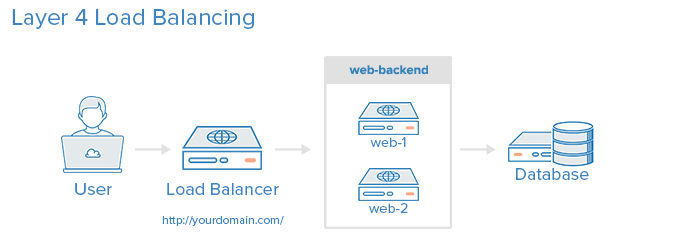

Layer

4 load balancing

The easiest way to load balance network traffic to multiple servers is to use Layer 4 load balancing (transport layer). Load balancing in this way will forward user traffic based on IP range and port (i.e. if a request arrives for http://yourdomain.com/anything, traffic will be forwarded to the backend that handles all yourdomain.com requests on port 80). For more details on Layer 4, see the TCP subsection of our Networking Overview.

Here is a diagram of a simple example of

layer 4 load balancing:

The user accesses the load balancer, which forwards the user’s request to the web-backend group of backend servers. Whichever backend server is selected will respond directly to the user’s request. In general, all servers in web-backend should serve identical content; otherwise, the user could receive inconsistent content. Note that both web servers connect to the same database server.
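As a rough sketch of what a layer 4 setup could look like in the HAProxy configuration, the following uses mode tcp in both the frontend and the backend, so traffic is forwarded without inspecting the HTTP request. The frontend name www is an assumption made for this example; the server names follow the earlier backend example.

```haproxy
# Layer 4 (TCP) load balancing: no inspection of the request content
frontend www
    bind *:80
    mode tcp
    default_backend web-backend

backend web-backend
    mode tcp
    balance roundrobin
    server web1 web1.yourdomain.com:80 check
    server web2 web2.yourdomain.com:80 check
```

Because the proxy works at the transport layer here, content-based ACLs such as path_beg are not available; every connection to port 80 goes to web-backend.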

Layer 7 load balancing

Another more complex way to load balance network traffic is to use layer 7 (application layer) load balancing. Using layer 7 allows the load balancer to forward requests to different back-end servers based on the content of the user’s request. This load balancing mode allows you to run multiple web application servers under the same domain and port. For more details on layer 7, see the HTTP subsection of our Introduction to Networking.

Here is a diagram of a simple example of layer 7 load balancing:

![layer 7 load balancing](https://assets.digitalocean.com/articles/HAProxy/layer_7_load_balancing.png)

In this example, if a user requests yourdomain.com/blog, it is forwarded to the blog backend, which is a set of servers running a blog application. Other requests are forwarded to the web backend, which might be running another application. Both backends use the same database server, in this example.

A snippet of the example frontend configuration would look like this:

    frontend http
        bind *:80
        mode http

        acl url_blog path_beg /blog
        use_backend blog-backend if url_blog

        default_backend web-backend

This sets up a frontend called http, which handles all incoming traffic on port 80.

acl url_blog path_beg /blog matches a request if the path of the user’s request begins with /blog.

use_backend blog-backend if url_blog uses the ACL to proxy the traffic to blog-backend.

default_backend web-backend specifies that all other traffic will be forwarded to web-backend.
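For reference, here is a sketch of how the frontend and the two backends from this guide might fit together in a single configuration file. The global and defaults sections, including the maxconn and timeout values, are assumptions added so that the file is complete; they are not tuned recommendations.

```haproxy
global
    # Assumed connection limit, for illustration only
    maxconn 2048

defaults
    mode http
    # Assumed timeouts; adjust for your own environment
    timeout connect 5s
    timeout client 30s
    timeout server 30s

frontend http
    bind *:80
    acl url_blog path_beg /blog
    use_backend blog-backend if url_blog
    default_backend web-backend

backend web-backend
    balance roundrobin
    server web1 web1.yourdomain.com:80 check
    server web2 web2.yourdomain.com:80 check

backend blog-backend
    balance roundrobin
    server blog1 blog1.yourdomain.com:80 check
    server blog2 blog2.yourdomain.com:80 check
```

A file like this can be validated without starting the proxy by running haproxy -c -f followed by the path to the file. Note that the server hostnames need to resolve for the check to pass.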

Load balancing algorithms

The load balancing algorithm that is used determines which server, in a backend, will be selected when load balancing. HAProxy offers several options for algorithms. In addition to the load balancing algorithm, servers can be assigned a weight parameter to manipulate how often the server is selected, compared to other servers.

Some of the commonly used algorithms are as follows:

roundrobin

Round Robin selects servers in turn. This is the default algorithm.

leastconn

Selects the server with the fewest connections. This is recommended for longer sessions. Servers in the same backend are also rotated in a round-robin fashion.

source

This selects which server to use based on a hash of the source IP address from which users make requests. This method ensures that the same users will connect to the same servers.
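The following sketch shows how each of these algorithms, and the weight parameter, might be set in a backend. The app-backend name, its servers, and the weight values are hypothetical and chosen only to illustrate the syntax.

```haproxy
backend web-backend
    # roundrobin with weights: web1 is selected roughly twice as often as web2
    balance roundrobin
    server web1 web1.yourdomain.com:80 check weight 2
    server web2 web2.yourdomain.com:80 check weight 1

# Hypothetical backend with long-lived sessions
backend app-backend
    # Prefer the server with the fewest active connections
    balance leastconn
    server app1 app1.yourdomain.com:80 check
    server app2 app2.yourdomain.com:80 check

backend blog-backend
    # Hash the client's source IP so a given client keeps reaching the same server
    balance source
    server blog1 blog1.yourdomain.com:80 check
    server blog2 blog2.yourdomain.com:80 check
```

With balance source, the mapping from a client to a server can change when servers are added to or removed from the backend, since the hash is distributed over the servers that are currently available.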

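Sticky sessions

Some applications require that a user continues to connect to the same backend server. This can be achieved through sticky sessions, using the appsession parameter in the backend that requires it. Note that appsession was removed in HAProxy 1.6; newer versions provide persistence through the cookie directive or stick tables instead.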
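One way to keep a user on the same server is cookie-based persistence. The sketch below is not taken from this guide's original configuration: the cookie name SERVERID and the per-server cookie values are assumptions chosen for illustration.

```haproxy
backend web-backend
    mode http
    balance roundrobin

    # HAProxy inserts a cookie named SERVERID (assumed name) into responses.
    # "indirect" hides the cookie from the backend servers, and
    # "nocache" marks the response as not cacheable when the cookie is inserted.
    cookie SERVERID insert indirect nocache

    # Each server gets its own cookie value; later requests that carry
    # that value are sent back to the matching server
    server web1 web1.yourdomain.com:80 check cookie web1
    server web2 web2.yourdomain.com:80 check cookie web2
```

The first request from a user is balanced normally. Subsequent requests that present the cookie go to the same server for as long as that server passes its health checks.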

Health Check

HAProxy uses health checks to determine if a backend server is available to process requests. This avoids having to manually remove a server from the backend if it becomes unavailable. The default health check is to try to establish a TCP connection to the server.

If a server fails a health check, and therefore is unable to serve requests, it is automatically disabled in the backend, and traffic will not be forwarded to it until it becomes healthy again. If all servers in a backend fail, the service will become unavailable until at least one of those backend servers becomes healthy again.

For certain types of backends, such as database servers, the default health check is not necessarily sufficient to determine whether a server is still healthy.
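As a sketch of checks that go beyond a plain TCP connection, the example below configures an HTTP check for web servers and a MySQL check for database servers. The /health path, the db-backend name, the database hostnames, the haproxy_check user, and the interval and threshold values are all assumptions made for illustration.

```haproxy
backend web-backend
    mode http
    # Send an HTTP request to a hypothetical /health endpoint
    # instead of only opening a TCP connection
    option httpchk GET /health
    # Treat the server as healthy only if it answers with status 200
    http-check expect status 200
    # Check every 5s; mark down after 3 failures, up again after 2 successes
    server web1 web1.yourdomain.com:80 check inter 5s fall 3 rise 2
    server web2 web2.yourdomain.com:80 check inter 5s fall 3 rise 2

# Hypothetical database backend
backend db-backend
    mode tcp
    # Perform a MySQL handshake as the assumed user haproxy_check,
    # which must exist on the database servers
    option mysql-check user haproxy_check
    server db1 db1.yourdomain.com:3306 check
    server db2 db2.yourdomain.com:3306 check
```

The HTTP check is only as meaningful as the endpoint behind it, so the application would need to serve /health in a way that reflects whether it can actually handle requests.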

The Nginx web server can also be used as a standalone proxy server or load balancer, and is often used in conjunction with HAProxy for its caching and compression capabilities.

High availability

The layer 4 and layer 7 load balancing configurations described in this tutorial use a load balancer to route traffic to one of many backend servers. However, the load balancer is a single point of failure in these configurations; if it stops working or is overwhelmed by requests, it can cause high latency or downtime for your service.

A high availability (HA) configuration is broadly defined as infrastructure without a single point of failure. It prevents a single server failure from being a downtime event by adding redundancy to every layer of your architecture. A load balancer facilitates redundancy for the backend layer (web/application servers), but for a true high availability configuration, you must also have redundant load balancers.

Here is a diagram of a high availability configuration:

In this example, you have multiple load balancers (one active and one or more passive) behind a static IP address that can be reassigned from one server to another. When a user accesses your website, the request passes through the external IP address to the active load balancer. If that load balancer fails, the failover mechanism will detect it and automatically reassign the IP address to one of the passive servers. There are several different ways to implement an active/passive high availability configuration. For more information, read How to use reserved IPs.

Conclusion

Now that you understand load balancing and know how to use HAProxy, you have a solid foundation to start improving the performance and reliability of your own server environment.

If you are interested in storing HAProxy output for later viewing, see How to Configure HAProxy Logging with Rsyslog on CentOS 8 [Quickstart].

If you’re looking to resolve a problem, see Common HAProxy Errors. If even more troubleshooting is needed, take a look at How to Fix Common HAProxy Errors.