Redis is an open-source in-memory data store that is used as a database, cache, and even message broker. Redis can easily be used with docker and docker-compose for local development as a cache for a web application. In this post, we will set up Redis with docker and docker-compose, where Redis will be used as a cache for a Node.js/Express REST API with PostgreSQL as the main database. Let’s get started!

Table of Contents

- Prerequisites

- Redis and Docker

- Redis with Docker-compose

- Add Redis to an existing Node.js application

- Conclusion

Prerequisites #

Before we start looking at the code, here are some good-to-have prerequisites:

- A general understanding of how Docker works would be advantageous.

- You are expected to have followed the Node.js Postgres tutorial that builds the Quotes API.

- Going through the post on Node.js and Redis would be very beneficial.

- Any working knowledge of Redis, its command line, and some basic commands like KEYS and MGET would be helpful.

With that said, we can now proceed to run Redis with just Docker first.

Redis and Docker #

Redis, as

mentioned, can also be used as a cache. For this post, we’ll use Redis as an in-memory cache instead of getting the data from a Postgres database. To do this, we will use the official Redis docker image from Dockerhub. To run Redis version 6.2 in an Alpine container we

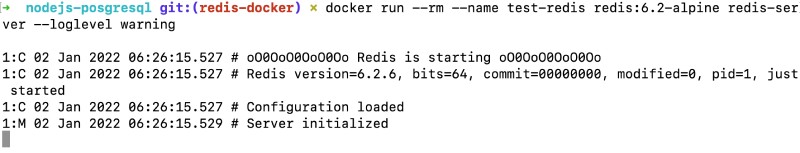

will run the following command:d ocker run -rm -name test-redis redis:6.2-alpine redis-server -loglevel warning

The above command will start a container named test-redis from the redis:6.2-alpine image with the log level set to warning. It will give the following output:

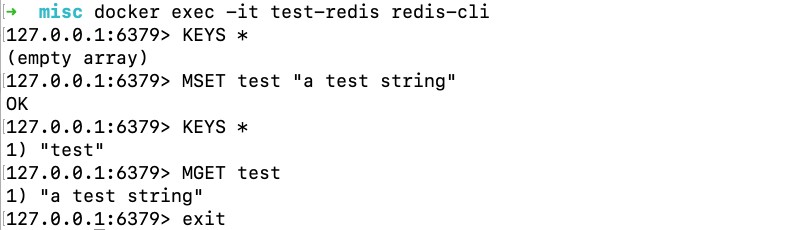

To execute some Redis commands inside the container, we can run docker exec -it test-redis redis-cli, which will start redis-cli in the running container. We can try some Redis commands like the following to see that things are working:

As seen above, we could set some value with the key proof and retrieve it. Since no volume is mounted and no persistence options are given, the keys and values will be lost when the container is stopped. If you’re looking for a relational database with Docker, try this tutorial on PostgreSQL and Docker. Next, we will see how to run the same version of Redis with docker-compose.

Redis with Docker-compose #

To run Redis with docker-compose, including persistence and authentication, we will use the docker-compose file called docker-compose-redis-only.yml as seen below:

    version: '3.8'
    services:
      cache:
        image: redis:6.2-alpine
        restart: always
        ports:
          - '6379:6379'
        command: redis-server --save 20 1 --loglevel warning --requirepass eYVX7EwVmmxKPCDmwMtyKVge8oLd2t81
        volumes:
          - cache:/data
    volumes:
      cache:
        driver: local

In the docker-compose file above, we have defined a service called cache. The cache service will pull the redis:6.2-alpine image from Dockerhub. It is set to always restart, so if the docker container fails for any reason, it will be started again. Then, we map container port 6379 to local port 6379. If we want to run multiple versions of Redis side by side, we can choose a different local port for each.

Next, we use a custom redis-server command with --save 20 1, which instructs the server to save a snapshot of the dataset to disk every 20 seconds if at least 1 write has happened, so the data survives a server restart. We are using the --requirepass parameter to add password authentication for reading and writing data on the Redis server. In a production-level application, the password would not be exposed like this. It is done here only because the setup is intended for development purposes.
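One way to read the save rule is as a simple condition on elapsed time and write count. The JavaScript sketch below models that reading only. The function names parseSaveRule and shouldSnapshot are hypothetical, and the boundary handling is simplified, so treat it as an illustration rather than a description of how Redis implements snapshotting internally.

```javascript
// Simplified model of a "save <seconds> <changes>" rule such as "20 1".
// Hypothetical helper names; this is not Redis's actual implementation.

// Turn "20 1" into { seconds: 20, changes: 1 }.
function parseSaveRule(rule) {
  const [seconds, changes] = rule.trim().split(/\s+/).map(Number);
  if (!Number.isInteger(seconds) || !Number.isInteger(changes)) {
    throw new Error(`Invalid save rule: "${rule}"`);
  }
  return { seconds, changes };
}

// A snapshot is due only when BOTH conditions hold:
// enough time has passed and enough writes have accumulated.
function shouldSnapshot(rule, secondsSinceLastSave, writesSinceLastSave) {
  const { seconds, changes } = parseSaveRule(rule);
  return secondsSinceLastSave >= seconds && writesSinceLastSave >= changes;
}
```

Under this model, an idle server with no writes never triggers a snapshot, no matter how much time has passed.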

After that, we use a volume for /data, where the writes will be persisted. It is mapped to a volume called cache. This volume is managed by the local driver, and you can read more about Docker volume drivers if you want.

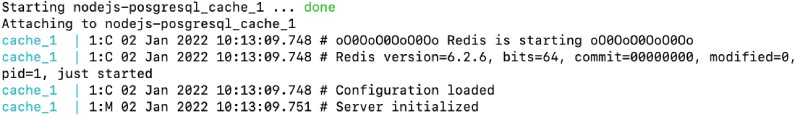

If we run a docker-compose up with the previous file using docker-compose –

f docker-compose-redis-only.yml up will give an output like the following:

This container runs similarly to the previous one. The two main differences are that a volume is mounted to preserve the saved data across container restarts and a password is required for authentication. In the next section, we’ll add Redis to an existing application that has a PostgreSQL database and a Node.js API using that database.

Add Redis to an existing Node.js application #

As an example for this guide, we are using the Quotes API application built with Node.js and Postgres. We will introduce the Redis service in the existing docker-compose file as follows:

    version: '3.8'
    services:
      db:
        image: postgres:14.1-alpine
        restart: always
        environment:
          - POSTGRES_USER=postgres
          - POSTGRES_PASSWORD=postgres
        ports:
          - '5432:5432'
        volumes:
          - db:/var/lib/postgresql/data
          - ./db/init.sql:/docker-entrypoint-initdb.d/create_tables.sql
      cache:
        image: redis:6.2-alpine
        restart: always
        ports:
          - '6379:6379'
        command: redis-server --save 20 1 --loglevel warning --requirepass eYVX7EwVmmxKPCDmwMtyKVge8oLd2t81
        volumes:
          - cache:/data
      api:
        container_name: quotes-api
        build:
          context: ./
          target: production
        image: quotes-api
        depends_on:
          - db
          - cache
        ports:
          - 3000:3000
        environment:
          NODE_ENV: production
          DB_HOST: db
          DB_PORT: 5432
          DB_USER: postgres
          DB_PASSWORD: postgres
          DB_NAME: postgres
          REDIS_HOST: cache
          REDIS_PORT: 6379
          REDIS_PASSWORD: eYVX7EwVmmxKPCDmwMtyKVge8oLd2t81
        links:
          - db
          - cache
        volumes:
          - ./:/src
    volumes:
      db:
        driver: local
      cache:
        driver: local

This file is similar to the docker-compose file above. The main change here is that the api service now also depends on the cache service, which is our Redis server. On top of that, in the api service, we’re passing the Redis-related credentials as additional environment variables: REDIS_HOST, REDIS_PORT, and REDIS_PASSWORD. These parts have been highlighted in the previous file.
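To make the role of these variables concrete, here is a minimal sketch of how the API could turn them into connection settings. The function name redisConfigFromEnv and the localhost/6379 fallbacks are assumptions for illustration, not the code from the Quotes API repository. The returned url uses the common redis://:password@host:port form that many Redis clients accept.

```javascript
// Sketch: derive Redis connection settings from environment variables.
// redisConfigFromEnv and its default values are hypothetical, for illustration only.
function redisConfigFromEnv(env = process.env) {
  const host = env.REDIS_HOST || 'localhost';
  const port = Number(env.REDIS_PORT || 6379);
  const password = env.REDIS_PASSWORD || '';

  if (!Number.isInteger(port) || port < 1 || port > 65535) {
    throw new Error(`Invalid REDIS_PORT: ${env.REDIS_PORT}`);
  }

  // URL-encode the password in case it contains characters such as "@" or ":".
  const auth = password ? `:${encodeURIComponent(password)}@` : '';
  return { host, port, password, url: `redis://${auth}${host}:${port}` };
}
```

Inside the compose network the host is the service name cache, which is why REDIS_HOST is not localhost for the api container.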

When we run a regular docker-compose up with this docker-compose.yml file, it will produce output similar to the following:

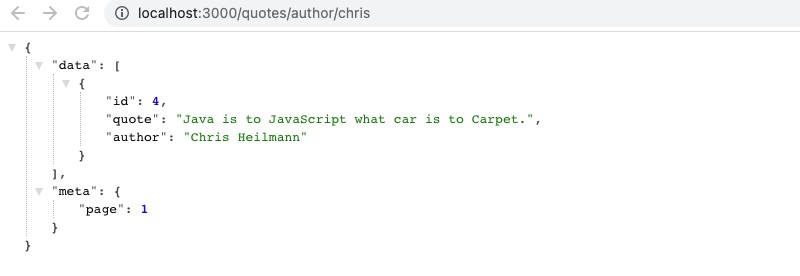

Depending on whether the Postgres container already has data, it will behave a little differently. Now, if we hit http://localhost:3000/quotes/author/chris it will show the following output:

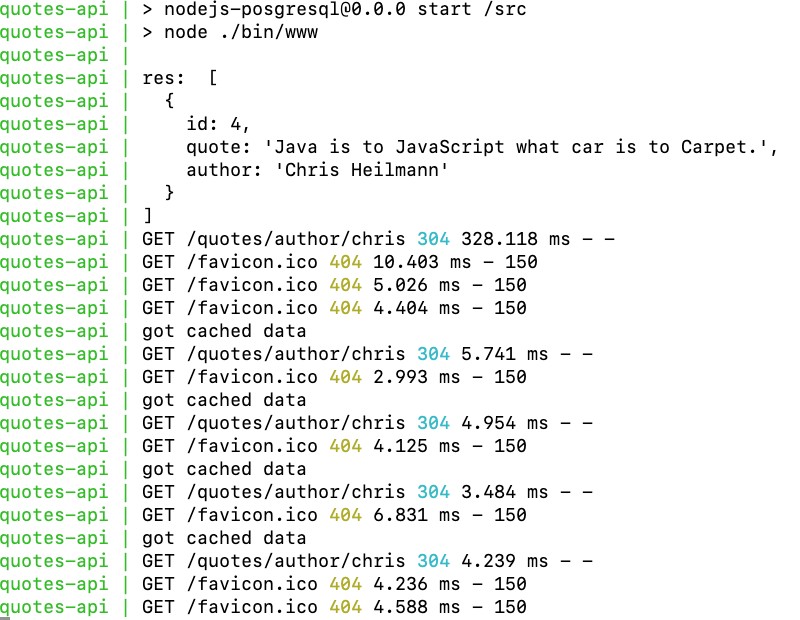

If we refresh this page 2-3 times and return to the docker-compose console tab, we should see something similar to the following:

As we can see above, the first hit was to the database and it took 328,118ms to get the citations for author Chris. All subsequent requests got the request from the Redis cache and it was super fast, responding between 5.7 and 3.48ms. As the cached content is there for 10 minutes, if we run

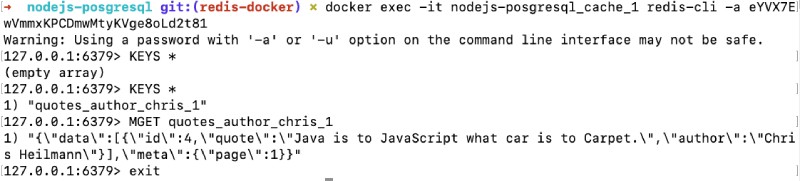

the redis-cli inside the container with the following command:docker exec -it nodejs-posgresql_cache_1 redis-cli -a eYVX7EwVmmxKPCDmwMtyKVge8oLd2t81

Then we can see the contents of the cached key and its value with: KEYS * to list all keys. To list the value of the found key, we can use MGET quotes_author_chris_1 will show us the contents of that particular key as seen below:

The PostgreSQL query cache will make things faster for subsequent calls, but if there are many (thousands or millions of) rows in the database, the query cache won’t work as well. That’s where an in-memory key-value cache like Redis gives a huge performance boost, as seen above. The change made for this tutorial is available as a pull request for your reference.
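The behavior described in this section (first request from the database, later requests from the cache for 10 minutes) is commonly called the cache-aside pattern. Below is a minimal sketch of it, assuming a few things that are not in the original code: an in-memory Map stands in for Redis so the example runs without a server, the names createFakeCache, cacheKey, and getQuotesByAuthor are hypothetical, and the trailing number in the key is assumed to be a page number.

```javascript
// Minimal cache-aside sketch. The Map-based cache is a stand-in for Redis;
// this is NOT the tutorial's actual implementation.

const CACHE_TTL_SECONDS = 600; // 10 minutes, as described in the post

// Hypothetical stand-in for a Redis client: async get/set with expiry in seconds.
function createFakeCache(now = () => Date.now()) {
  const store = new Map();
  return {
    async get(key) {
      const entry = store.get(key);
      if (!entry) return null;
      if (entry.expiresAt <= now()) {
        store.delete(key); // expired entries behave like missing keys
        return null;
      }
      return entry.value;
    },
    async set(key, value, ttlSeconds) {
      store.set(key, { value, expiresAt: now() + ttlSeconds * 1000 });
    },
  };
}

// Mirrors the shape of the quotes_author_chris_1 key seen in redis-cli.
// Treating the trailing number as a page is an assumption.
function cacheKey(author, page) {
  return `quotes_author_${author}_${page}`;
}

// Try the cache first; on a miss, query the database and store the result.
async function getQuotesByAuthor(author, page, { cache, fetchFromDb }) {
  const key = cacheKey(author, page);
  const cached = await cache.get(key);
  if (cached !== null) {
    return { source: 'cache', quotes: JSON.parse(cached) };
  }
  const quotes = await fetchFromDb(author, page);
  await cache.set(key, JSON.stringify(quotes), CACHE_TTL_SECONDS);
  return { source: 'db', quotes };
}
```

In a real application, fetchFromDb would run the Postgres query and cache would be a Redis client. The values are serialized to JSON because Redis stores strings, not JavaScript objects.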

Conclusion #

We saw how to use Redis with docker and then with docker-compose. We also added Redis as a cache to an existing Node.js API and witnessed the performance benefits.

I hope this helps you understand how to use Redis in any application with docker and docker-compose without any problems.

Keep caching!